PREVIEW

One of the key principles of a good learning process is to keep learning things slightly beyond your current understanding. If the subject is too similar to what you already know, you won’t make much progress. On the other hand, if the subject is too difficult, you’ll stall and learn next to nothing. Deep learning involves many different topics and a lot to learn, so a good strategy is to start by working with something that others have already built for us. That is why frameworks are so valuable: they free us from worrying about the details of how to build a model, so we can concentrate on what we want to achieve rather than on how to do it.

WHAT IS AllenNLP?

AllenNLP is a framework that makes building deep learning models for Natural Language Processing genuinely enjoyable.

If you work on Natural Language Processing (NLP), you have probably come across the name AllenNLP at least once. Some readers may have used it already, or tried to and given up because the library seemed complex, with too many moving parts. For those who are not yet familiar with AllenNLP, this post gives a concise overview of the library and how it works. AllenNLP is an open-source library for building and evaluating deep learning models that solve NLP problems, and for developing NLP applications.

INTRODUCTION

AllenNLP is a deep learning library for Natural Language Processing (NLP), built on PyTorch by the Allen Institute for Artificial Intelligence, one of the leading AI research organizations, in close collaboration with researchers at the University of Washington. With a dedicated team of best-in-field researchers and software engineers, the AllenNLP project is uniquely positioned to provide state-of-the-art models with high-quality engineering. Developing a model with AllenNLP is much easier than building one in PyTorch from scratch. It not only simplifies development but also supports managing experiments and evaluating them afterwards. AllenNLP is designed with research in mind: it makes it possible to prototype a model quickly and easier to manage experiments with many different parameters.

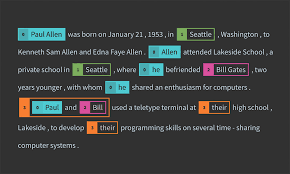

AllenNLP encourages a particular development and experiment flow. For your own research project, you only need to implement a DatasetReader and a Model, and then run your experiments with config files. AllenNLP is built on the following principles:

Hyper-modular and lightweight, so you can use just the parts you like alongside PyTorch.

Extensively tested and easy to extend: test coverage is above 90%, and the example models provide a template for your own projects.

Takes padding and masking seriously, making it easier to implement correct models.

Experiment friendly: it runs reproducible experiments from a JSON specification, with comprehensive logging.

OUTLINE

If you are familiar with PyTorch, the overall structure of AllenNLP will feel familiar. The basic AllenNLP pipeline is composed of the components described below. These components are loosely coupled, which means it is easy to swap in different Models and DatasetReaders without changing other parts of your code. Even so, they work very well together. To take advantage of all the features available to you, you need to understand what each component is responsible for and what conventions it must respect. That is what the following sections cover, beginning with the DatasetReader.

DatasetReader – To tell AllenNLP which dataset to use and how to read it, we set the ‘dataset_reader’ key in the JSON config file. A DatasetReader reads data from a given location and constructs a Dataset. All parameters needed to read the data, apart from the file path, should be passed to the DatasetReader’s constructor. The DatasetReader takes the raw dataset as input and applies preprocessing such as lowercasing and tokenization.
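
To make the reader's job concrete, here is a minimal sketch in plain Python. It does not use the AllenNLP API: the function name `read_instances`, the tab-separated "label, text" line format, and the whitespace tokenizer are all assumptions chosen for illustration. It only shows the kind of work a reader does, which is turning raw text into tokenized, labelled instances.

```python
from typing import Dict, Iterator, List


def read_instances(lines: List[str], lowercase: bool = True) -> Iterator[Dict[str, object]]:
    """Turn raw 'label<TAB>text' lines into simple instance dictionaries.

    Hypothetical helper for illustration; not part of AllenNLP.
    """
    for line in lines:
        line = line.strip()
        if not line:
            continue  # skip blank lines in the raw data
        label, text = line.split("\t", 1)
        if lowercase:
            text = text.lower()  # preprocessing step: lowercasing
        tokens = text.split()  # preprocessing step: naive whitespace tokenization
        yield {"tokens": tokens, "label": label}


# In-memory lines stand in for a file on disk.
raw = ["pos\tAllenNLP makes prototyping fast", "", "neg\tToo many moving parts"]
instances = list(read_instances(raw))
print(instances[0])
```

Note that the preprocessing option (`lowercase`) is an argument separate from the data itself, which mirrors the convention described above of passing reading parameters to the reader rather than hard-coding them.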

Field – Field objects in AllenNLP correspond to the inputs to a model, or to the fields in a batch that is fed into a model, depending on how you look at it. The model receives a single input for each Field. Each Field handles converting its data into tensors, so if you need to do some unusual processing when converting your data into tensor form, you should probably write your own custom Field class.
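
The idea of a field that knows how to turn its own data into numbers can be sketched as follows. This is a toy stand-in, not AllenNLP's Field class: `ToyTextField`, `build_vocab`, and the `<pad>` / `<unk>` symbols are hypothetical names, and plain Python lists are used where a real field would produce PyTorch tensors.

```python
from typing import Dict, List

PAD, UNK = "<pad>", "<unk>"  # hypothetical special symbols for this sketch


def build_vocab(token_lists: List[List[str]]) -> Dict[str, int]:
    """Assign an integer id to every token seen in the training data."""
    vocab = {PAD: 0, UNK: 1}
    for tokens in token_lists:
        for token in tokens:
            vocab.setdefault(token, len(vocab))
    return vocab


class ToyTextField:
    """Holds one tokenized input and converts it to a padded list of ids."""

    def __init__(self, tokens: List[str]) -> None:
        self.tokens = tokens

    def as_indices(self, vocab: Dict[str, int], padded_length: int) -> List[int]:
        # Unknown tokens map to the UNK id; short inputs are right-padded.
        ids = [vocab.get(token, vocab[UNK]) for token in self.tokens]
        return ids + [vocab[PAD]] * (padded_length - len(ids))


vocab = build_vocab([["deep", "learning"], ["deep", "models"]])
field = ToyTextField(["deep", "nlp", "models"])
indices = field.as_indices(vocab, padded_length=5)
print(indices)
```

A custom field in this spirit would override the conversion step (`as_indices` here) when the data needs special handling on its way to numeric form.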

Model – To specify the model, we set the ‘model’ key. There are three more parameters inside the ‘model’ key: ‘model_text_field_embedder’, ‘internal_text_encoder’ and ‘classifier_feedforward’. With the ‘model_text_field_embedder’ key we tell AllenNLP how the data should be encoded before it is passed to the model. The Model takes the input data and returns the results of the forward computation along with the evaluation metrics.

Data Iterator – We need to split the training data into batches. AllenNLP provides an iterator called BucketIterator that makes the computation more efficient by padding each batch only up to the maximum input length in that batch. To do that, it sorts the instances by the number of tokens in each text. These parameters are set under the ‘iterator’ key.
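
The bucketing idea can be sketched in a few lines of plain Python. This is a simplified illustration of the technique, not AllenNLP's implementation (which does more than this): the function name `bucket_batches` and the use of `0` as the padding id are assumptions. It also builds a mask for each batch, so a model can tell real tokens from padding.

```python
from typing import List, Tuple

Batch = Tuple[List[List[int]], List[List[int]]]  # (padded ids, mask)


def bucket_batches(sequences: List[List[int]], batch_size: int, pad_id: int = 0) -> List[Batch]:
    """Group sequences of similar length and pad each batch to its own maximum."""
    ordered = sorted(sequences, key=len)  # sort by number of tokens
    batches = []
    for start in range(0, len(ordered), batch_size):
        chunk = ordered[start:start + batch_size]
        max_len = max(len(seq) for seq in chunk)
        padded = [seq + [pad_id] * (max_len - len(seq)) for seq in chunk]
        # Mask is 1 for real tokens and 0 for padding positions.
        mask = [[1] * len(seq) + [0] * (max_len - len(seq)) for seq in chunk]
        batches.append((padded, mask))
    return batches


data = [[5, 6, 7, 8, 9], [1], [2, 3], [4, 4, 4, 4]]
batches = bucket_batches(data, batch_size=2)
for padded, mask in batches:
    print(padded, mask)
```

Without sorting, the one-token sequence could share a batch with the five-token one and need four padding positions; after sorting, the first batch here needs only one.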

Trainer – AllenNLP provides a Trainer class that removes the need for boilerplate code and offers all sorts of functionality, including access to TensorBoard, one of the best visualization and debugging tools for training neural networks.
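
Putting the configuration keys mentioned above together, an experiment file might be shaped roughly like the sketch below. Only the key names ‘dataset_reader’, ‘model’, ‘iterator’ and the three model parameters come from the text; every value, including the `type` names and the batch size, is a placeholder assumed for illustration, and a real configuration would fill in the empty sections.

```python
import json

# Skeleton of an experiment specification. Values are illustrative placeholders.
config = {
    "dataset_reader": {"type": "my_reader"},  # hypothetical reader name
    "model": {
        "type": "my_classifier",  # hypothetical model name
        "model_text_field_embedder": {},  # how inputs are encoded (left empty here)
        "internal_text_encoder": {},
        "classifier_feedforward": {},
    },
    "iterator": {"type": "bucket", "batch_size": 32},  # assumed values
}

# Serialize to JSON text, the format the experiment specification is written in.
serialized = json.dumps(config, indent=2)
print(serialized)
```

Keeping the whole experiment in one JSON document is what makes runs easy to reproduce: changing a parameter means editing the file, not the code.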

CONCLUSION

AllenNLP is a genuinely well-designed piece of software. It is easy to use, easy to customize, and improves the quality of the code you write. The best way to learn AllenNLP is to apply it to a problem you want to solve. The documentation is a great source of information, though I find that reading the code is sometimes much faster than searching for an answer elsewhere.

For more technical articles, visit https://blarrow.tech/category/blog/